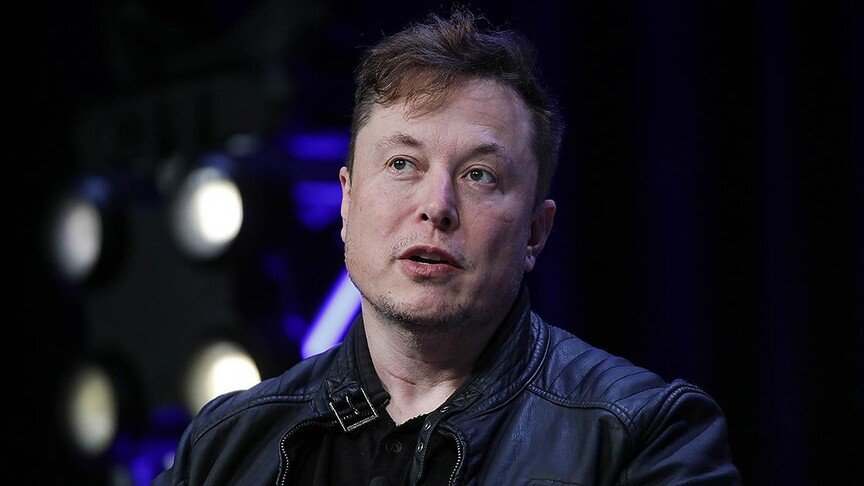

If it wasn’t already clear, Elon Musk and Sam Altman hate each other.

While the two men were once cofounders of OpenAI, they’re now locked in a vicious feud, playing out in all its theatrics in front of a judge and jury in a California courtroom. Musk is suing, alleging that Altman and OpenAI president Greg Brockman tricked him into forming and funding the organization as a non-profit before they subsequently restructured it to have a for-profit entity. OpenAI says Musk was well aware of those plans and frames the lawsuit as an attempt to derail a competitor.

I know this story all too well. I’ve been reporting on OpenAI since 2019, embedding within its office for three days shortly after Musk stepped away and Altman formally took up the CEO position. If there’s anything I’ve learned from my years of following this company and the AI industry, it’s that this world breeds bitter rivalries.

It’s not a coincidence that nearly all of OpenAI’s original founders left the company under acrimonious conditions, nor that every tech billionaire has a largely identical AI company. The frenetic AI race is inseparable from the petty, clashing egos of the unfathomably rich, hellbent on dominating one another.

Indeed, if Musk were to win his bid, that could be devastating for OpenAI, especially as it prepares this year for a potential initial public offering. Musk seeks $150bn in damages from the company and one of its top investors, Microsoft. He also seeks to return OpenAI to a non-profit, to remove Altman and Brockman as leaders of the for-profit, and to boot Altman off the non-profit board.

Yet, to assume that the future of AI development will be determined by a personality contest misses the point. Yes, Brockman’s diary entries are revealing, as was former OpenAI chief technology officer Mira Murati’s testimony about Altman pitting executives against each other, confirming my previous reporting.

But fixating on whether Altman is untrustworthy, or whether Musk is even less so, distracts from a far deeper problem. If OpenAI lost its footing as the AI industry frontrunner, another barely distinguishable competitor – Musk’s xAI or another – would simply replace it. That includes companies like Anthropic, which enjoy a better reputation yet engage in many similar behaviors, like compromising careful decision-making for speed, disregarding intellectual property, and aggressively scaling their computing infrastructure to the detriment of communities.

Nothing about this trial or OpenAI’s financial structure will change the imperial drive of these companies to consolidate ever-more data and capital, terraform the earth, exhaust and displace labor, and embed themselves deep within the state to gain leverage over its apparatuses of violence. We would still exist in a world in which a tiny few have the profound power to cast it in their image and dictate how billions of people live.

As much as Silicon Valley would wish you to believe it, AI does not necessitate imperial conquest, nor could broad-based benefit from the technology ever emerge from such a foundation. Before the industry made a hard pivot into developing extraordinarily resource-intensive AI models, a full breadth of other types of AI flourished: small, specialized systems for detecting cancer, for reviving disappearing languages, for forecasting extreme weather events, for accelerating drug discovery. So, too, did ideas to develop new AI technologies, including those that didn’t need much data at all, and those that required only mobile devices, not vast supercomputers, to train.

Even now with large language models, an abundance of research and examples such as DeepSeek already show that different techniques can produce the same capabilities with a tiny fraction of the scale that AI companies use to justify their planet-consuming ambitions.

“Scaling is a cheap formula for getting more performance, but it’s also a highly imprecise formula,” Sara Hooker, the former vice-president of research at Canadian AI company Cohere, once told me. “We love it so much because it kind of fits predictable planning cycles. It’s easier to say ‘throw more compute at the problem’ than to design a new method.”

But these myriad paths wither in the empires’ shadow. In the first quarter of last year, nearly half of all venture money went to just two companies: OpenAI and Anthropic. That’s the tip of the iceberg of a yearslong capital consolidation that has hollowed out academia and starved research counter to, or simply out of step with, the corporate agenda. From 2004 to 2020, the percentage of AI PhD graduates who chose to join industry jumped from 21% to 70%, according to a study by MIT researchers in Science. And it’s not just the diversity in AI development that’s suffering. In 2024, funding for climate tech plunged 40% as investors redirected their dollars in part to the brute-force scaling of the AI empires.

It doesn’t have to be that way. And over the past year, as I’ve traveled to dozens of cities around the US and globally, I’ve seen this realization dawning. People everywhere are picking up the mantle of collective resistance. Most visible and vibrant have been the data center protests popping up in communities across geographies and political divides. In New Mexico, I met with residents eager to educate themselves about the AI industry over potluck and to demand transparency and accountability for local projects, such as a massive multibillion-dollar OpenAI supercomputing campus being proposed in the state as part of the company’s $500bn Stargate computing infrastructure buildout.

At a gathering in New York, I listened as KeShaun Pearson, a leader in the fight in Memphis, Tennessee, against Musk’s Colossus supercomputers, gave a heartfelt reminder of the toll that the facility’s dozens of methane gas turbines were having on his community. “Take two deep breaths,” he said to the audience. “That’s a human right” that was being taken from them. As of this month, Anthropic is using Colossus.

At the same event, Kitana Ananda, another community leader from Tucson, Arizona, mobilizing against Project Blue, an Amazon hyperscale AI facility, described the deep-seated feeling that she and her fellow residents shared: that they fought not just for their own community but for every community being steamrolled by the AI industry. And on a 114F day, as they packed into city hall in a show of force and watched the council vote 7-0 to pause the project in its existing form, they whooped and cried with the elation that their victory was every community’s victory.

Workers are also striking across sectors and countries: in northern California, more than 2,000 healthcare professionals at Kaiser Permanente walked out over the threat of AI being used to automate their work or degrade patient outcomes. In Kenya, data workers and content moderators contracted by AI companies to train and clean up their models are organizing to bring international attention to their exploitation and demand better working conditions.

In more than 30 countries, cultural workers from voice actors to screenwriters to manga illustrators are mobilizing to denounce issues ranging from the training on their work to the use of AI systems to rip their likeness or replace them, according to the Worker Mobilizations around AI database, a research effort led by the Creative Labour & Critical Futures group at the University of Toronto.

Educators and students are pressuring their institutions. Victims and their families are suing. Tech employees themselves are campaigning. Group chats for more organizing abound. People are marching.

The upwelling of collective pushback seems to be forcing the AI industry to downsize its ambitions. Already, more than $150bn worth of infrastructure projects were blocked or stalled in 2025, according to Data Center Watch, an effort tracking the opposition by AI research firm 10a Labs. Investors are taking note and beginning to discount their projections of how much AI companies can deliver on their promises.

OpenAI shuttered its video-generation app Sora, once lauded by company executives as one of its most important products and a new frontier in AI development. As the Wall Street Journal reported, Sora’s demise ultimately stemmed from several intersecting considerations shaped by grassroots action: flatlining usage, rocky public perception, tightening financials, and heavy constraints on computational resources.

Here’s the thing about empires. They don’t just seek to devour everything – they depend on it for their survival. In other words, the very thing that appears to give them paramount strength is their greatest vulnerability. When even a fraction of the resources they need are withheld, the giants begin to stumble. So if you’re wondering what will deliver real accountability to the AI industry and a different vision of the technology’s development, look beyond the billionaire mudfight. The real work is happening everywhere else.